What It Takes for AI to Deliver Learning Gains: Insights from Implementers and Investors

Artificial intelligence, particularly generative AI, is rapidly entering the education sector, with growing interest from governments, foundations, investors, and technology developers alike. Across the ecosystem, organizations are experimenting with new tools designed to improve learning and teaching and expand access to education.

Yet, as AI solutions move from early experimentation toward classroom integration, deeper questions are emerging: What evidence matters when evaluating whether AI improves learning? And what conditions are required for responsible and sustainable adoption?

International organizations increasingly frame AI as both a transformative opportunity and a governance challenge for education systems. UNESCO has emphasized that AI has the potential to help address some of the world’s most pressing education challenges, including teacher shortages, learning gaps, and inequitable access to high-quality instruction, while also enabling more personalized learning and improved assessment tools. At the same time, UNESCO cautions that rapid technological advances are outpacing policy frameworks and institutional preparedness, underscoring the need for stronger guidance on ethics, data protection, and responsible use in classrooms.

Evidence from recent global surveys also suggests that adoption is already widespread. A 2025 UNESCO survey found that roughly two-thirds of higher education institutions worldwide either have developed or are in the process of developing formal guidance on AI use in teaching and learning, reflecting the speed with which institutions are responding to the rise of generative AI. Likewise, AI-enabled tools are increasingly being used in K-12 settings for adaptive tutoring, automated assessment, and learning analytics designed to personalize instruction and provide teachers with real-time insights on student progress. However, global readiness remains uneven: only a small number of countries have developed national AI curricula or guidance for schools.

Researchers and policymakers also note that the benefits of AI will depend heavily on system readiness, including equitable access to technology and teacher training. Without these, there is growing concern that AI could deepen existing inequities, particularly between high-income and low- and middle-income countries (LMICs), where infrastructure and language representation in AI systems remain limited.

Two perspectives in particular help illuminate these concerns: that of those developing AI-enabled tools and infrastructure, and that of those investing in the companies bringing these solutions to market.

To explore these perspectives, the Education Finance Network (EFN) spoke with two EFN members working at different points in the AI-for-education space: Romana Kropilova, Director of EdTech at Fab AI, whose organization conducts research and development to guide responsible implementation of AI-enabled learning tools, and Tetyana Astashkina, General Partner at LearnLaunch Fund + Accelerator, an investor supporting AI-driven education companies.

Across these conversations, we asked how these organizations are approaching the opportunities and complexities of AI in education, and what conditions they believe will be required for AI-enabled solutions to meaningfully improve learning outcomes.

To What Extent Can AI Address the Learning Crisis?

For some implementers working closely with education systems, the starting point for AI innovation is often the scale of the learning crisis itself. As Romana Kropilova explains, “Fab AI’s work is all about addressing the ongoing learning crisis in low- and middle-income countries, where learning outcomes remain very low. For example, in Sub-Saharan Africa, up to nine out of ten children are not able to read a simple text by the age of ten.”

In this context, Fab AI focuses on developing shared infrastructure and knowledge for the broader ecosystem. “We want to make sure that AI amplifies learning rather than deepens inequities… Instead of building a single product, our approach is to create public goods for developers and different actors in the education ecosystem, helping the field understand how to implement AI-enabled tools responsibly. We hope these goods can serve as practical blueprints for others, with clear documentation of what works, under which conditions, and which elements may need to be adapted in different contexts to lead to improved learning outcomes.”

From an investor’s perspective, there is a similar understanding that AI has the capability to reshape the broader education landscape. As Tetyana Astashkina explains, “At LearnLaunch Fund + Accelerator, we do not view AI as a single ‘problem area’ to invest in. Instead, it is a foundational capability reshaping the entire learning and workforce ecosystem. Our investment thesis spans the full continuum of education and skills development, supporting AI applications across a wide range of learning contexts.”

Taken together, these perspectives suggest that AI alone is unlikely to resolve the learning crisis, but it may reshape how education systems respond to it. Emerging evidence indicates that AI’s most immediate impact may come through augmenting the daily work of teachers and learners rather than replacing it. A 2025 Microsoft study and a 2025 KPMG study suggest that students are using AI primarily to save time, improve the quality of their work, and support their understanding of new concepts, while educators are using it to generate lesson materials, adapt instruction, and manage administrative tasks more efficiently.

These early uses could point to a more incremental but potentially powerful role for AI: helping teachers focus more time on instruction, enabling more personalized feedback for students, and strengthening the everyday processes through which learning happens. In this sense, as Kropilova and Astashkina expressed, AI may not be a single solution to the learning crisis, but part of a broader shift toward tools that expand the capacity of educators and education systems to support learning at scale.

What Evidence Matters for AI in Education?

As AI tools proliferate, both implementers and investors emphasize the importance of evidence. But what counts as meaningful evidence can vary depending on where organizations sit within the ecosystem.

A 2025 UNESCO report stresses that evaluation frameworks and meaningful evidence should connect AI functionality and algorithm performance to observable improvements in student learning outcomes. A 2025 World Bank report also suggests that teacher time savings and instructional quality are becoming important complementary indicators alongside impact on learning.

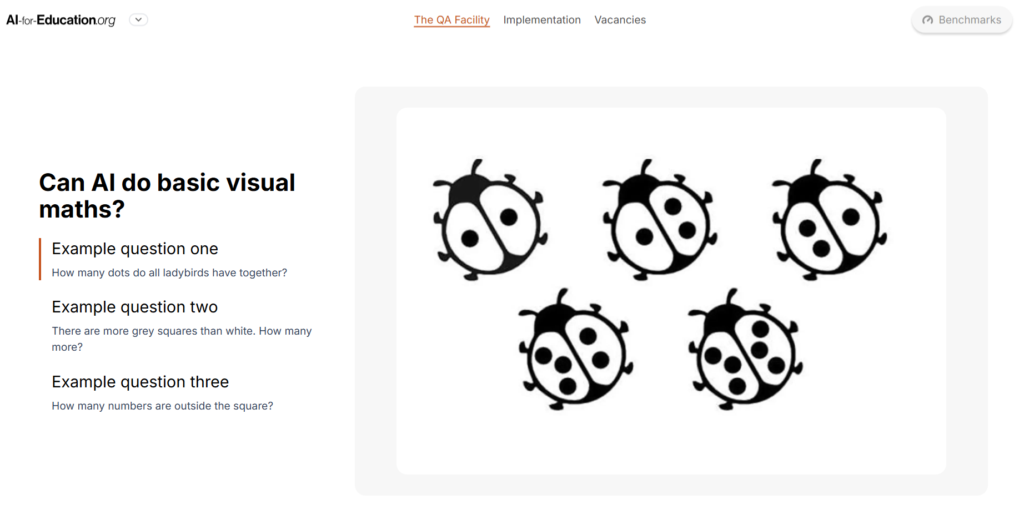

For Fab AI, improving measurement, also known as “quality assurance,” is a critical first step. As Kropilova shares, “What does not get measured does not get improved. That is why looking into what AI-enabled tools can and cannot do well is crucial. One concrete example from our work is the development of benchmarks, putting the different AI models to test in areas relevant for foundational learning. We do it not to showcase where models struggle but to help discover gaps that need to be addressed.” An example of the kinds of questions AI should be able to answer, from one of the visual reasoning and maths benchmarks that Fab AI is developing, is depicted below:

Global education reports similarly indicate that foundational literacy and numeracy gains remain a significant outcome indicator in LMICs for early childhood and primary education. AI-enabled assessment and tutoring tools are increasingly evaluated based on whether they improve fluency and comprehension.

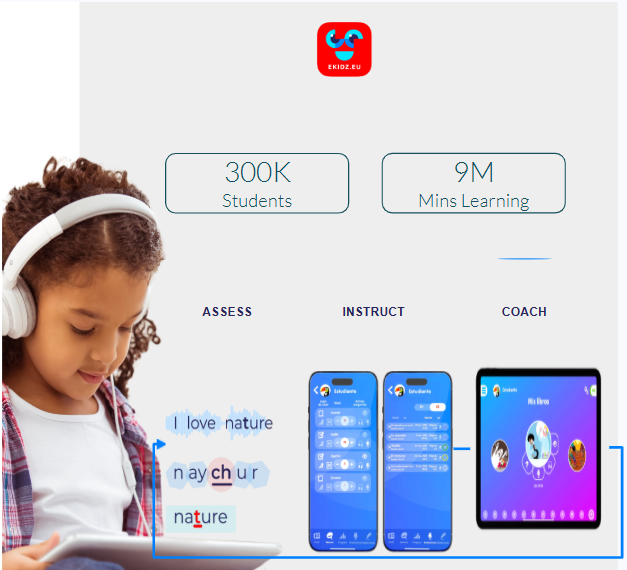

In addition to learning outcomes, investors approach measurement through the lens of potential impact and market readiness. Astashkina points to LearnLaunch’s investment in the literacy assessment company eKidz, which supports children as they develop reading skills across multiple languages, providing teachers with personalized data insights for each student to improve instruction. Importantly, she notes, “automatic speech recognition (ASR) is not a new thing, but it is much harder to get it right for children’s voices.”

The company’s advanced ASR technology stood out because of its performance, as depicted below. “Amongst other things, eKidz demonstrated higher accuracy than what was on the market” and the potential to improve teachers’ efficiency by automating assessment, as well as to improve assessors’ confidence, real-time instruction, and agency in reading and writing. After using eKidz, students could understand texts that were 10-12% more complex.

Investors may also be looking closely for indicators that solutions can scale effectively. As Astashkina notes, “The next step for eKidz is to prove larger scale roll-out capability,” which could entail making new partnerships for broader adoption while ensuring its technology “maintains its edge” as AI tools evolve rapidly.

There are thus various, evolving ways to measure the impact of AI on education: model performance and safeguards, real improvements in teaching and learning, and evidence of sustainable scale across markets while maintaining competitiveness. Rather than converging on a single metric, collaborating across actors to develop holistic evaluation frameworks can help ensure the best AI tools reach the students most in need.

The Challenges of Classroom Implementation

As AI tools move from experimentation to everyday use in schools, a new set of implementation challenges is emerging, one that extends beyond model performance into questions of equity, governance, trust, and ultimately, responsible financing.

A 2025 Microsoft study and a 2026 OECD report found that educators are already using generative AI to help create lesson materials, improve feedback, and streamline administrative tasks. Yet the same Microsoft study highlights persistent concerns about plagiarism, misinformation, overreliance on AI, and the lack of training for teachers tasked with navigating these tools in classrooms. Administrators and school leaders are particularly focused on ethical concerns, resource constraints, and how to ensure equitable access to AI-enabled tools across schools and student populations.

Evidence from other recent surveys reinforces the complexity of this transition. A 2025 study by Carnegie Learning found that educator use of AI has roughly doubled over the past year, with various tools in use on the market. However, administrators reported using AI more frequently than teachers in the survey. Attitudes toward student use of AI may also be shifting. Approximately 59% of educators reported being somewhat or very comfortable with students using AI tools, even as concerns about privacy remain widespread, with 22% of educators reporting they are very concerned about AI-related privacy issues and 54% somewhat concerned. These findings suggest that while educators are increasingly open to experimentation, questions about governance, training, and responsible use remain central to implementation.

For Fab AI, balancing innovation with responsible data governance is indeed a top priority, particularly when working with children’s data. Drawing on Fab AI’s experience developing ASR models for early grade reading assessment, similar to eKidz, Kropilova explains that “it is the balance… between innovation and assuring ownership and data protection when working with children’s data,” such as the recordings of children used to train ASR models, that the organization has to keep.

This tension is particularly visible in efforts to build datasets for languages that are currently underrepresented in AI systems. “Building new datasets for low-resource languages is crucial for AI to not widen the inequities,” Kropilova states.

At the same time, regulatory constraints around data protection can make collaboration more difficult. “Today’s personal data protection regulations do not allow for an easy way to share the voice data with other organizations to use for additional model training and research… Yet, we also believe that initiatives such as the Global Alliance for Learning Innovation (GAILA) managed by Dalberg… can help advance solutions.”

From an investor’s perspective, there may also be concerns about whether these technologies will endure in the long run. Astashkina points out that the education sector itself often moves slowly when adopting new technologies. “Successful classroom adoption requires seamless integration with existing instruction, teacher training, and curriculum alignment – challenges as slow-moving and massive as tectonic plates.”

Investors may therefore need to see technology developers and implementers engage more effectively and intentionally with local schools and governments to streamline adoption, pointing to the need for partnerships and collaboration across the education ecosystem.

What It Takes to Scale: Partnerships and Shared Infrastructure

In practice, many of the most promising initiatives are built through collaborations between technology developers, governments, research institutions, and philanthropy. For example, the Global Alliance for Learning Innovation (GAILA), coordinated by Dalberg with partners UNICEF and the Gates Foundation, is working to support responsible development and scaling of AI-enabled education solutions by strengthening shared infrastructure, research, and cross-sector collaboration. At the national level, partnerships between AI and education authorities are also becoming increasingly common.

For Fab AI, collaboration is foundational to the organization’s approach. As Kropilova shares, “The learning crisis cannot be addressed by any organization alone.” This philosophy is reflected in Fab AI’s commitment to creating public tools and infrastructure and sharing learnings from its research “for others to build on.” Accordingly, Fab AI assesses uptake of its work “by the key stakeholders [they] are trying to reach,” as well as whether its “benchmarks, tools and research are used to inform product development, policy discussions, or further research.”

Astashkina adds that “partnerships with foundations, governments or research organizations can help de-risk adoption, test new markets and provide credibility.” With respect to LearnLaunch’s investment in eKidz, “A recent pilot [carried out with Fundación Luker, another EFN member organization] in Colombia has shown that the model can scale internationally. In the US, eKidz is pursuing partnerships with LeanLab Education and UPenn focussed on feasibility and implementation across eKidz pilots.” Such localized partnerships, in which organizations co-lead and validate pilots in the contexts where these technologies are intended to be adopted, are arguably key to demonstrating impact and potential to scale.

Public-private partnerships (PPPs) are thus critical as AI-for-education progresses. Promising models include outcomes-based financing (OBF), data collaboratives, and cross-sector alliances linking education with workforce needs, all of which can help ensure that the focus remains on learning gains and that solutions serve learners equitably and sustainably.

Looking Ahead: Implications for the EduFinance Community

As the experiences of Fab AI and LearnLaunch suggest, the future of AI in education will depend on partnerships across education, finance, and local government actors that allow these innovations to be implemented responsibly at scale. For the EduFinance community, the call is to:

- Invest in evidence, not just innovation. Prioritize rigorous, holistic evaluation of AI tools, focusing on learning outcomes, teacher effectiveness, and real classroom impact.

- Build partnerships that streamline and scale solutions. Local governments, researchers, investors, and implementers must collaborate to develop shared data infrastructure, governance frameworks, and pilot platforms.

- Finance responsible adoption of AI. Mobilize catalytic capital, blended finance, and outcomes-based funding to support experimentation while ensuring equitable and sustainable deployment in education systems.

The education finance community ultimately has a critical role to play in aligning capital, evidence, and partnerships to ensure AI innovations move beyond pilots to responsibly improve learning outcomes at scale.

Astashkina emphasizes keeping a broad approach to AI, “recognizing that many of the most important markets are only now beginning to emerge,” and keeping the focus on outcomes, such as “strengthening the connection between education and work, and creating more personalized and effective pathways for learners.” With this, AI “has the potential to advance these goals far beyond what was previously possible.”

This blog was authored by Sarah Deonarain, Program Associate at the Education Finance Network (EFN), with contributions from Romana Kropilova, Director of EdTech at Fab AI, and Tetyana Astashkina, General Partner at LearnLaunch Fund + Accelerator.

Disclaimer: This article reflects insights and personal opinions shared in virtual interviews with the contributors.